API ROI: Maximize the ROI of Automated API Testing Solutions

The goal of automation in API testing is to get rid of repetitive tasks. Parasoft's AI-enhanced API testing solutions automate the validation of your API tests for a good return on investment. Check out this post to see how.

APIs and the “API economy” are drawing growing attention from developers and industry experts alike, but that attention does not always extend to API testing.

If we want the business-critical APIs our organizations depend on to be truly secure, reliable, and scalable, it’s time to start prioritizing API testing. After all, API testing tools can provide some very valuable benefits:

- Reduced development and testing costs

- Reduced risks

- Improved efficiency

API Testing Tools Reduce Development and Testing Costs

API testing tools drive cost reduction through:

- Reducing testing costs.

- Lowering the amount of technical debt you’re accruing.

- Helping you eliminate defects when it’s easier, faster, and cheaper to do so.

Reduce Testing Costs

Without an API testing solution, an organization’s API testing efforts predominantly involve manual testing plus limited automation fueled by home-grown scripts or tools and a motley assortment of open source or COTS testing tools. Having an integrated API testing solution dramatically reduces the resources required to define, update, and execute the prescribed test plan. It also enables less experienced, less technical resources to perform complex testing.

Teams can decrease testing costs by:

- Reducing outsourced (consultants/contractors) testing costs.

- Reducing internal testing costs.

Reduce Technical Debt

Technical debt refers to the eventual costs incurred when software is allowed to be poorly designed. For example, assume an organization failed to validate the performance of certain key application functionality before publishing its API. A year after deployment, API adoption skyrocketed, and performance began to suffer. After diagnosing the issue, the organization learned that inefficiencies in the underlying architecture caused the problem.

The result? What could have been a two-week development task snowballed into a four-month fiasco that stunted the development of competitive differentiators.

API testing exposes poor design and vulnerabilities that will trigger reliability, security, and performance problems when the API is released. This helps organizations:

- Reduce the cost of application changes.

- Increase revenues through quick responses to new opportunities and changing demands.

Remediate Earlier

The unfortunate reality of modern application development is that applications are all too often deployed with minimal testing and “quality assurance” is relegated to end users finding and reporting defects they encountered in production. In such situations, early remediation has the potential to reduce customer support costs as well as promote customer loyalty, which has become critical to the business now that switching costs are at an all-time low.

The earlier a defect is detected, the faster, easier, and cheaper it is to fix. For example, let’s return to the scalability problem in the previous section. Imagine a developer noticing and fixing a performance issue during development versus a key customer reporting it in the field.

In the first case, the developer modifies the code during the allotted development time and checks it in as part of the development task. In the second, the organization’s account management team, support team, and subject matter experts end up documenting the issue from the client’s perspective, and then product management and development are engaged to fix a customer emergency.

Numerous models have estimated that the cost difference between preventing a defect and finding and fixing a defect in production is, at a minimum, 30X. The benefits of earlier remediation enable teams to:

- Reduce the cost of finding and fixing defects.

- Reduce customer support costs associated with defects reaching production.

API Testing Tools Reduce Risks

In the vast majority of development projects, schedule overruns or last-minute “feature creep” result in software testing being significantly shortchanged or downgraded to a handful of verification tasks. Since testing is a downstream process, the cycle time allotted for testing activities is drastically curtailed when timelines of upstream processes are stretched.

API testing solutions enable a greater volume, range, and scope of tests to be defined and executed in a limited amount of time. As a result, QA teams are much better equipped to complete the expected testing within compressed testing cycles.

With compressed timeframes and manual test environments, organizations are compelled to make trade-offs around testing. These conditions lead to “happy path” testing: testing only the simplest intended use case.

Given the complexity of today’s modern systems, this happy path is hardly sufficient to ensure integrity. Automating API testing provides organizations with the sophisticated tools required to move beyond happy path testing. This approach enables organizations to exercise a broader range of test conditions and scenarios that simply would not be feasible under manual testing conditions.
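
As a rough illustration of moving beyond the happy path, the sketch below runs one valid request and several invalid ones through the same table-driven check. Everything in it is hypothetical: `create_order` is an in-process stand-in for an endpoint (a real suite would send requests to the service under test), and the field name, limits, and status codes are assumptions chosen for the example.

```python
# Hypothetical in-process stand-in for an API endpoint. A real suite
# would send HTTP requests to the service under test instead.
def create_order(payload):
    """Return (status_code, body) for an order-creation request."""
    if not isinstance(payload, dict):
        return 400, {"error": "body must be a JSON object"}
    quantity = payload.get("quantity")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(quantity, int) or isinstance(quantity, bool):
        return 400, {"error": "quantity must be an integer"}
    if quantity < 1 or quantity > 100:
        return 422, {"error": "quantity out of range"}
    return 201, {"id": 1, "quantity": quantity}


# Each case pairs an input with the status code the API should return.
# Only the first case is the happy path.
CASES = [
    ("happy path", {"quantity": 2}, 201),
    ("missing field", {}, 400),
    ("wrong type", {"quantity": "2"}, 400),
    ("zero quantity", {"quantity": 0}, 422),
    ("above upper bound", {"quantity": 101}, 422),
    ("non-object body", [1, 2, 3], 400),
]


def run_cases(handler, cases):
    """Run every case and return the names of the ones that failed."""
    failures = []
    for name, payload, expected_status in cases:
        status, _body = handler(payload)
        if status != expected_status:
            failures.append(name)
    return failures


if __name__ == "__main__":
    print(run_cases(create_order, CASES))
```

Adding a new negative scenario is a one-line change to the table, which is what makes broad coverage practical once the checks are automated.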

Automating API testing is not only faster and more accurate at identifying defects than manual testing. It is also able to expose entire categories of risks that evade traditional manual testing efforts. For example, API testing solutions can automatically simulate a daunting array of security attacks, check whether the back end of the application is behaving properly as test scenarios execute, and validate that interoperability standards and best practices are being followed. Such tasks are commonly neglected since they are inherently unsuitable for manual testing.

The risk of change is also reduced with an automated API testing solution. The freedom to confidently evolve the application in response to business needs is predicated on the ability to detect when such changes unintentionally modify or break existing functionality. Identifying such problems before they reach production requires continuous execution of a comprehensive test suite. However, it’s rarely feasible to manually execute a broad set of test scenarios each time an application is updated (often daily), examine the results, and determine if anything changed at any layer of the complex distributed system. With automation, such testing becomes routine.
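
One common way to detect unintended changes automatically is to compare each new response against a stored baseline. The sketch below is a minimal version of that idea under simplifying assumptions: responses are already parsed JSON objects, lists are compared as whole values, and the function name `diff_responses` and the sample payloads are invented for illustration.

```python
def diff_responses(baseline, current, path=""):
    """Return human-readable differences between two JSON-like values."""
    if isinstance(baseline, dict) and isinstance(current, dict):
        changes = []
        # Walk the union of keys so additions and removals are both reported.
        for key in sorted(set(baseline) | set(current)):
            child = f"{path}.{key}" if path else key
            if key not in current:
                changes.append(f"removed: {child}")
            elif key not in baseline:
                changes.append(f"added: {child}")
            else:
                changes.extend(diff_responses(baseline[key], current[key], child))
        return changes
    # Non-dict values (including lists) are compared as a whole.
    if baseline != current:
        return [f"changed: {path}: {baseline!r} -> {current!r}"]
    return []


if __name__ == "__main__":
    # Hypothetical responses recorded before and after a code change.
    baseline = {"id": 7, "status": "open", "total": {"amount": 10, "currency": "USD"}}
    current = {"id": 7, "status": "open", "total": {"amount": 10}, "notes": ""}
    for line in diff_responses(baseline, current):
        print(line)
```

Run on every build, a check like this flags a silently dropped field such as `total.currency` long before a consumer of the API notices it.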

API testing tools reduce risk by enabling teams to:

- Define and execute more tests in a shorter period of time.

- Leverage the test infrastructure to increase the breadth and scope of testing.

- Decrease the number and severity of defects passed on to customers.

- Decrease the time between defect origination and discovery.

- Decrease the time between defect discovery and resolution.

API Testing Tools Increase Efficiency

Automated API testing has a staggering impact on productivity. API testing tools promote a “building block” approach to testing, which means QA, performance testers, and security testers never have to start from scratch. They begin with an automatically generated foundational set of functional test cases, then rearrange and extend the components to suit more sophisticated business-driven test scenarios. These tests can then be leveraged for security testing as well as load testing. When team members are all building upon one another’s work rather than constantly reinventing the wheel, each can focus on completing the value-added tasks that are their specialty.

Given the complexity of how APIs are leveraged in today’s modern applications, defining and executing a test scenario that addresses the various technologies, protocols, and layers involved in a single business transaction is challenging enough. Checking that everything is operating as expected across the disparate distributed components—each and every time that test scenario is executed—is simply insurmountable without proper automation.

Exacerbating the situation is the fact that business applications are now evolving at a staggering speed. As a result, test assets rapidly become worthless if they’re not kept in sync with the evolving application. API testing solutions can automate 95% of key test definition, execution, and maintenance tasks, yielding tremendous efficiency gains.

An API testing tool can deliver efficiency gains by helping teams:

- Increase the number of test cycles a QA team can complete in a given timeframe.

- Increase the number and variety of tests performed in a given timeframe.

- Reduce time spent manually executing tests prior to UAT.

- Reduce time to market through faster test cycles.

API Testing for Improved Return on Investment

We’ve discussed how API testing can reduce costs and risks. So how can your team maximize the return on investment (ROI) using Parasoft’s automated API testing solutions? Before we dive in, let’s highlight the key features of a quality API testing tool that help to maximize ROI.

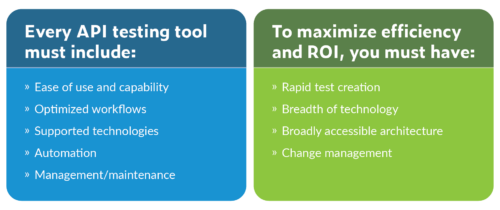

Automated API Testing Tool Must-Haves

As you evaluate functional test automation solutions, there are five key areas that you should be able to check off in order to find the best API testing tools. There are also some must-haves to maximize ROI.

Let’s dig into the details of how the Parasoft automated API testing solution guarantees ROI.

Rapid Test Creation

The number of APIs in modern applications is exploding and becoming more and more difficult to manage, let alone test. Most applications have a combination of known public interfaces and undocumented APIs that fly under the radar of testers. The best way to deal with this scenario is to observe the application during testing to see all the traffic and interfaces used at runtime.

Parasoft SOAtest includes the smart API test generator, which uses a web browser extension to capture all the traffic between the UI and the frontend services. Using artificial intelligence (AI), it deduces the data relationships in the API traffic and creates a test scenario template. SOAtest lets testers manipulate these templates to create test suites rapidly and easily.
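
To make the idea of deducing data relationships in recorded traffic more concrete, here is a deliberately naive sketch. It is not how SOAtest works internally (the source does not describe that); it only assumes that recorded traffic is available as an ordered list of steps with flat request and response fields, and it links a response value to any later request that reuses the exact same value. The function name, step names, and field names are all hypothetical.

```python
def find_data_links(traffic):
    """Find response values that later requests reuse.

    traffic is an ordered list of dicts with "name", "request", and
    "response" keys, where request and response are flat dicts of
    hashable values. Returns tuples of
    (producer_step, response_field, consumer_step, request_field).
    """
    links = []
    seen = {}  # value -> (step name, response field) that first produced it
    for step in traffic:
        # A request value that an earlier response produced is a candidate link.
        for field, value in step["request"].items():
            if value in seen:
                producer, source_field = seen[value]
                links.append((producer, source_field, step["name"], field))
        # Record this step's response values for the steps that follow.
        for field, value in step["response"].items():
            seen.setdefault(value, (step["name"], field))
    return links


if __name__ == "__main__":
    # Hypothetical recording of three calls made by a web UI.
    traffic = [
        {"name": "login", "request": {"user": "alice"}, "response": {"token": "t-123"}},
        {"name": "create_cart", "request": {"auth": "t-123"}, "response": {"cart_id": "c-9"}},
        {"name": "add_item", "request": {"auth": "t-123", "cart": "c-9", "sku": "A1"},
         "response": {"ok": "true"}},
    ]
    for link in find_data_links(traffic):
        print(link)
```

Links like these are what let a recorded session become a reusable template: the token and cart ID can be parameterized from earlier responses instead of being hard-coded into later requests.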

By leveraging existing UI tests, teams can create a suite of API tests. These tests can be expanded upon to build functional and nonfunctional test suites, while still integrating all test results and metrics with the other realms of testing: unit, API, UI, and other manual tests.

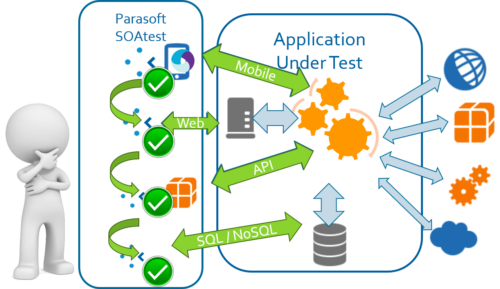

Breadth of Technology

Enterprise architecture is evolving. Trends like the Internet of Things (IoT) and microservices have expanded the landscape. Testing tools must be resilient to these changes and support current and future communication mechanisms in applications.

Parasoft SOAtest supports a wide variety of legacy and current communication protocols, including support for IoT and microservices. More than that, it supports testing and results from what might not be considered APIs at all, such as web, mobile, and direct database access. If it’s not supported now, you can easily customize the tool to include new protocols.

More importantly, all testing results in the Parasoft suite of tools are stored in a common place and correlated by component, build, requirement, and test/test suite. Tests aren’t limited to what’s in the box.

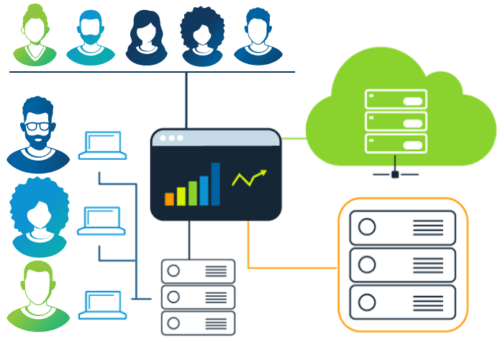

Accessible Architecture

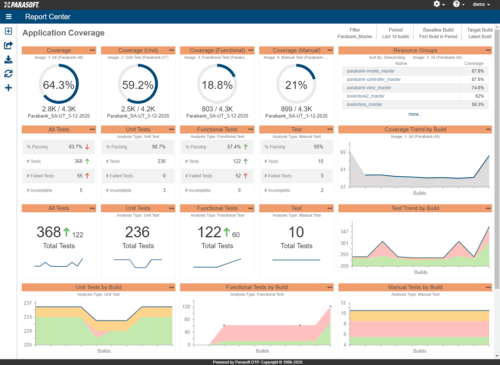

For an automated API testing tool to increase productivity, it needs to be in the hands of many. Developers, testers, managers, and whoever else needs it should have access to the test information and results. Detailed test settings aren’t of interest to managers, but metrics such as code coverage, API coverage, requirement coverage, and the current state of the test suite do matter.

For all the ad hoc users, Parasoft SOAtest provides a thin client to access test cases created and data captured during recording. The Parasoft DTP reporting and analytics platform collects this data in a central repository and provides various views into the data depending on role and needs.

Accessibility means more than user interfaces. Tools must scale with the projects and organization and integrate into the processes already in place. Parasoft SOAtest is available on the desktop and browser for immediate use and integrates with automation pipelines to run test suites offline. As the project scales, the API and web service testing tool is designed to handle growing test suites and code bases. Helping teams manage change and growth is an important aspect.

Change Management

Constant change is the reality of modern enterprise software development. Everything can be a moving target, from product requirements to security and privacy challenges to brittle architectures and legacy code.

Software organizations are dealing with this to some extent by adopting Agile processes and continuous integration and deployment pipelines for a more iterative and incremental approach to all aspects of the software development life cycle.

Any software automation tool integrating into modern “software factories” needs to help teams manage change, not only of the tool’s own artifacts but also to reduce the burden of change in general.

Typical projects require two to six weeks of testing and test refactoring for each new version of a product. These large test cycles are delaying application release schedules without improving quality and security outcomes.

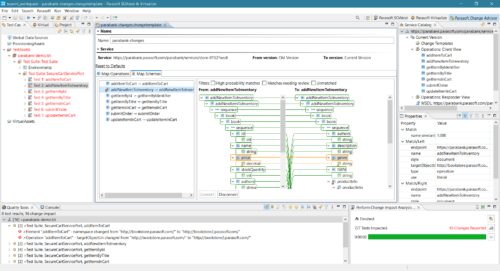

Parasoft SOAtest has native support for version control systems for test suite artifacts so testers can manage change at the API test level using their existing version control systems. Testers can visually inspect the differences between API tests with the usual ability to check in and check out from the repository.

There’s more to managing change than the tool’s artifacts. Parasoft testing tools work in conjunction with the development testing platform that collects and analyzes data from all levels of testing. This analysis is displayed as various dashboards that allow developers, testers, and managers to understand the impact of change on their code and tests.

Understanding the impact of code changes is not limited to what to test but also involves what tests are missing and what tests need updating. Code changes don’t always require rerunning tests. They often need new tests and impact other components and their tests. Understanding the full impact of each change is critical to validating the new code changes and to the stability of the rest of the application.

By focusing testing on where it’s needed the most, teams can eliminate extraneous testing and guesswork. This reduces the cost of testing and improves test outcomes with better tests, more coverage, and streamlined test execution. Parasoft performs this analysis on each test that’s run, including manual, API, and UI test results, not just for test pass/fail, but also their coverage impact on the codebase. As code is changed, the impact is clearly visible on the underlying record, highlighting tests that now fail or code that is now untested.
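
The general idea behind this kind of change impact analysis can be sketched in a few lines. The example below is an illustration only, not Parasoft's implementation: it assumes per-test coverage has already been collected as a mapping from test name to the source files that test exercises, and all test and file names are hypothetical.

```python
def analyze_change_impact(coverage_by_test, changed_files):
    """Return (tests_to_rerun, untested_changes).

    coverage_by_test maps a test name to the source files it exercises.
    changed_files lists the files modified in the current change.
    """
    changed = set(changed_files)
    # A test is impacted if it exercises at least one changed file.
    tests_to_rerun = sorted(
        test for test, files in coverage_by_test.items() if changed & set(files)
    )
    # Changed files that no test touches are gaps that need new tests.
    covered = set()
    for files in coverage_by_test.values():
        covered.update(files)
    untested_changes = sorted(changed - covered)
    return tests_to_rerun, untested_changes


if __name__ == "__main__":
    # Hypothetical coverage data and change set.
    coverage = {
        "test_login": ["auth.py", "session.py"],
        "test_checkout": ["cart.py", "payment.py"],
        "test_search": ["search.py"],
    }
    rerun, gaps = analyze_change_impact(coverage, ["payment.py", "refund.py"])
    print("rerun:", rerun)
    print("untested:", gaps)
```

In this example only `test_checkout` needs to run again, while `refund.py` surfaces as changed code with no test behind it, which mirrors the two outcomes described above: fewer unnecessary test runs and a visible list of missing tests.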

Summary

To maximize the ROI benefits of API test automation, it’s clear that a tool needs to be usable and accessible by the entire team and provide quick and easy ways for testers to build adaptable and resilient test suites.

Just as important is how the testing tools help teams manage changes in code, requirements, and technology. Parasoft SOAtest helps teams realize the promise of API test automation while improving their testing outcomes. It also reduces lengthy test cycles to accelerate project delivery.