Add Static Analysis to Your Security Testing Toolbox

There are many ways and techniques for detecting vulnerabilities in your software. One of the best ways is static analysis security testing (SAST). Here is how you can go about deploying SAST in software security testing.

There are several techniques to identify vulnerabilities in software and systems. Smart organizations keep them in their “security toolbox” and use a combination of testing tools including:

- Static analysis security testing (SAST)

- Dynamic analysis security testing (DAST)

- Software composition analysis (SCA)

- Vulnerability scanners

- Penetration testing

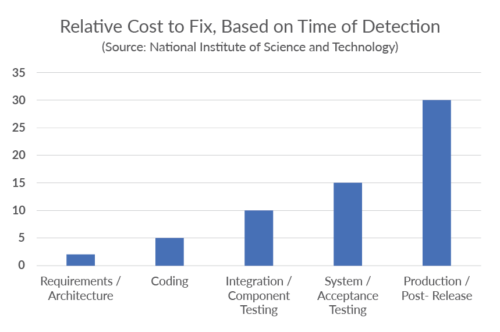

The motivation for improving security through automated tools is to shift left: to identify and remediate vulnerabilities as early as possible in the software development life cycle (SDLC). Fixes and remediation get more complicated as the application nears release. Figure 1 shows how the cost of remediating vulnerabilities increases dramatically as the SDLC progresses.

For in-depth coverage of the economics of software security, check out The Business Value of Secure Software whitepaper. This post focuses on using static analysis security testing as part of an organization’s security practice.

Static vs. Dynamic Security Testing: What’s the Difference?

As the name suggests, static testing analyzes an application without running it. In other words, the analysis is performed on source code, binaries, and/or configuration files. Static tools use their understanding of the semantics of the source to deduce errors and vulnerabilities.

These “error detectors” are known as checkers or rules. If a rule is violated, a warning is issued with information on where in the code the violation took place and usually trace information to track down the root cause.

Dynamic testing applies to running applications. These tools detect problems as the application executes, often by adding small bits of code called instrumentation. Instrumentation also helps determine code coverage and answer the question: have I done enough testing?

Errors are reported as the application runs, and the reports usually include context information so developers can find and fix the detected vulnerabilities.
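To illustrate how instrumentation can answer the coverage question, here is a minimal sketch. It assumes hand-placed probes. Real dynamic tools insert them automatically, and the `probe` and `classify` names are hypothetical.

```python
executed = set()   # labels of probes that have fired


def probe(label):
    """Instrumentation hook: record that this point in the code ran."""
    executed.add(label)


def classify(n):
    """Function under test, with a probe at the entry and in each branch."""
    probe("entry")
    if n < 0:
        probe("negative-branch")
        return "negative"
    probe("non-negative-branch")
    return "non-negative"


ALL_PROBES = {"entry", "negative-branch", "non-negative-branch"}

classify(5)   # the only test case so far
missed = sorted(ALL_PROBES - executed)
print(f"coverage: {len(executed)}/{len(ALL_PROBES)} probes, missed: {missed}")
```

With a single test input, the negative branch never runs, so the report shows that more testing is needed before that path can be trusted.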

The main differences between static and dynamic security testing tools include the following.

- Usage in the SDLC. Static tools like SAST are used early during development and can be applied to code as it’s written by developers. A running application isn’t required to get meaningful results from SAST tools. This early detection is very useful in reducing the impact of vulnerabilities later in development and testing. SAST tools are also used to enforce secure coding guidelines, which help prevent vulnerabilities in the first place.

Dynamic tools like DAST tools can only be applied to running applications and therefore come later in development. However, with modern CI/CD pipelines, running applications are available sooner and more often than before, making DAST tools more useful.

- Technology. As mentioned, static tools work by analyzing source code and have sophisticated algorithms for detecting vulnerabilities. These tools behave similarly to compilers and analyze code during builds, but they are also available in IDEs for local use by developers. A large part of what SAST tools do is report and manage warnings.

Dynamic tools, on the other hand, can incorporate test frameworks to automate test case generation, stubbing, and mocking; take advantage of instrumentation; and use special runtime libraries to detect vulnerabilities while the application is running. They also include capabilities to capture and report on these findings. Trace information is usually included, which makes for quick remediation. Since these errors are actually occurring in a running application, there are usually no false positives.

- Scope. The scope of SAST tools includes the entire code base, which means detection of vulnerabilities in code that isn’t executed during “normal” operation of the application. Using flow analysis, it’s possible for SAST tools to explore branches of code, such as error conditions, that aren’t triggered during testing. It’s possible that vulnerabilities lie in these untested pieces of code.

DAST tools, on the other hand, detect vulnerabilities in executing code. There’s a high degree of confidence in these findings. In addition, runtime conditions, such as complicated multithreaded behavior, introduce new types of errors and vulnerabilities that SAST tools miss.

- Limitations. Both DAST and SAST tools have limitations. DAST tools require a running application and the scope of errors that they can detect is often limited. However, when DAST tools detect vulnerabilities, they’re high risk and worthy of an immediate fix. DAST tools produce few falsely reported errors, known as false positives.

SAST tools have to trade off false positives with potentially missing real vulnerabilities, known as false negatives, in order to provide the best ROI for securing source code. False positives are always possible with SAST tool analysis, but the payoff is early detection of vulnerabilities that might be missed in later testing.

Both SAST and DAST tools can miss real errors, so best practice is to use them together to reduce the number of vulnerabilities that slip through.
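The trade-off between false positives and false negatives can be made concrete with two standard measures, precision and recall. The sketch below is illustrative only: the counts are made up, and the "conservative" and "aggressive" labels are hypothetical tool configurations, not settings of any real product.

```python
def precision_recall(true_pos, false_pos, false_neg):
    """Precision: share of warnings that are real.
    Recall: share of real vulnerabilities that were reported."""
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    return precision, recall


# Made-up counts for a code base containing 60 real vulnerabilities.
conservative = precision_recall(true_pos=40, false_pos=10, false_neg=20)
aggressive = precision_recall(true_pos=55, false_pos=45, false_neg=5)

for name, (precision, recall) in (("conservative", conservative),
                                  ("aggressive", aggressive)):
    print(f"{name}: precision={precision:.2f} recall={recall:.2f}")
```

In this example the aggressive configuration misses fewer real vulnerabilities but reports many more false positives, which is the balance a SAST tool has to strike.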

Static Analysis Security Testing

SAST tools do not require a running application and therefore can be used early in the development lifecycle where remediation costs are low. At its most basic level, SAST works by analyzing source code and checking it against a set of rules. Usually associated with identifying vulnerabilities, SAST tools provide early alerts to developers regarding poor coding patterns that lead to exploits, violations of secure coding policies, or a lack of conformance with engineering standards that will lead to unstable or unreliable functionality.

There are two primary types of analysis used for identifying security issues.

- Flow analysis

- Pattern analysis

Flow Analysis

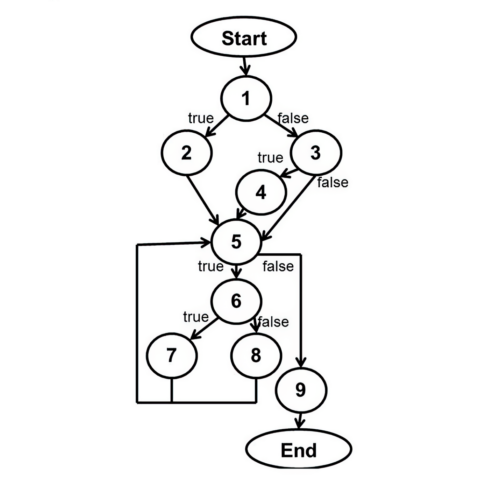

In flow analysis, the tools analyze source code to understand the underlying control flow and data flow of the code.

The result is an intermediate representation, or model, of the application. The tools run rules—or checkers—against that model to identify coding errors that result in security vulnerabilities. For example, in a C or C++ application, a rule may identify string copies, then traverse the model to determine if it is ever possible for the source buffer to be larger than the destination buffer. If it is, a buffer overflow vulnerability could result.
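The string-copy check described above can be sketched on a toy model. This is a minimal illustration, not how any particular SAST product works. The mini intermediate representation (`Decl`, `Copy`), the `find_overflows` function, and the buffer names are all hypothetical. It handles only straight-line code, with branching approximated by giving a buffer several possible sizes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decl:
    """Declares a buffer with one or more possible sizes (one per path)."""
    name: str
    sizes: frozenset
    line: int


@dataclass(frozen=True)
class Copy:
    """Models a string copy such as strcpy(dst, src)."""
    dst: str
    src: str
    line: int


def find_overflows(program):
    """Return (line, message) for copies where src may exceed dst."""
    sizes = {}      # buffer name -> sizes it may have on some path
    warnings = []
    for stmt in program:
        if isinstance(stmt, Decl):
            sizes[stmt.name] = set(stmt.sizes)
        elif isinstance(stmt, Copy):
            worst_src = max(sizes[stmt.src])   # largest possible source
            worst_dst = min(sizes[stmt.dst])   # smallest possible destination
            if worst_src > worst_dst:
                warnings.append(
                    (stmt.line,
                     f"possible overflow: {stmt.src} (up to {worst_src} bytes) "
                     f"copied into {stmt.dst} ({worst_dst} bytes)"))
    return warnings


program = [
    Decl("user_input", frozenset({16, 256}), line=3),  # size depends on branch
    Decl("name", frozenset({64}), line=4),
    Decl("label", frozenset({16}), line=5),
    Copy(dst="name", src="user_input", line=7),   # 256 > 64: flagged
    Copy(dst="name", src="label", line=8),        # 16 <= 64: safe
]

for line, message in find_overflows(program):
    print(f"line {line}: {message}")
```

The checker reasons about the worst case on any path, which is why it can flag a copy that never overflows in the tests a team happens to run.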

Pattern Analysis

Avoiding certain constructs in code that’s safety-critical is the basis behind modern software engineering standards like AUTOSAR C++14, MISRA C 2023, and Joint Strike Fighter (JSF). These standards reduce the chance that developers misinterpret, misunderstand, or incorrectly implement code and end up with unreliable software.

Pattern analysis helps developers use a safer subset of the development language given the context of safety or security, prohibiting the use of code constructs that allow vulnerabilities to occur in the first place. Some rules can identify errors by checking syntax, like a spell checker in a word processor. Some modern tools can detect subtle patterns associated with poor coding construction.
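A pattern rule of the simplest kind can be sketched as a text search for banned calls. This is a deliberately naive illustration: production tools work on parsed code rather than regular expressions, and the `BANNED_CALLS` table and its advice strings are assumptions made for this example, not taken from any standard.

```python
import re

# Hypothetical rule set: function name -> advice.
BANNED_CALLS = {
    "gets": "unbounded read; use fgets",
    "strcpy": "unbounded copy; use a length-checked alternative",
    "sprintf": "unbounded format; use snprintf",
}

# \b keeps fgets() and snprintf() from matching gets and sprintf.
CALL_PATTERN = re.compile(r"\b(" + "|".join(BANNED_CALLS) + r")\s*\(")


def check_source(source):
    """Return (line_number, function, advice) for each banned call."""
    findings = []
    for number, text in enumerate(source.splitlines(), start=1):
        # Naive comment handling: drop everything after the first //.
        code = text.split("//", 1)[0]
        for match in CALL_PATTERN.finditer(code):
            name = match.group(1)
            findings.append((number, name, BANNED_CALLS[name]))
    return findings


C_SOURCE = """\
#include <stdio.h>
#include <string.h>
void greet(const char *who) {
    char buf[32];
    strcpy(buf, who);          // flagged
    snprintf(buf, sizeof buf, "%s", who);
    // gets(buf); commented out, so not flagged
}
"""

for number, name, advice in check_source(C_SOURCE):
    print(f"line {number}: call to {name}(): {advice}")
```

Only the `strcpy` call on line 5 of the sample is reported. The bounded `snprintf` call and the commented-out `gets` are ignored.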

Advantages of SAST

Each testing methodology has strengths. Many organizations overly focus on DAST and penetration testing. But there are several advantages to using SAST over other testing techniques.

Code Coverage

The amount of code that’s tested is a critical metric for software security. Vulnerabilities can be present in any section of the codebase, and untested portions can leave an application exposed to attacks.

SAST tools, particularly those using pattern analysis rules, can provide much higher code coverage than dynamic techniques or manual processes. They have access to the application source code and application inputs, including hidden ones that are not exposed in the user interface.

Root Cause Analysis

SAST tools promote efficient remediation of vulnerabilities. Static analysis security testing identifies the precise line of code that introduces the error. Integrations with developers’ IDEs can accelerate remediation of the errors that SAST tools find.

Skills Improvement

Developers receive immediate feedback on their code when they use SAST tools from the IDE. The data reinforces and educates them on secure coding practices.

Operational Efficiency

Developers use static analysis early in the development lifecycle, including on single files directly from their IDE. Finding errors early in the SDLC greatly reduces the cost of remediation. It prevents bugs in the first place, so developers don’t have to find and fix them later.

How to Get the Most Out of SAST

SAST is a comprehensive testing methodology, and adopting it successfully requires some initial effort and motivation.

Deploy SAST as Early as Possible

While teams can use SAST tools early in the SDLC, some organizations elect to delay analysis until the testing phase. Even though analyzing a more complete application allows for inter-procedural data flow analysis, “shifting left” with SAST and analyzing code directly from the IDE can identify vulnerabilities such as input validation errors. It also enables developers to make simple corrections before submitting code for builds. This helps avoid late-cycle changes for security.

Use SAST With Agile and CI/CD Pipelines

SAST is often misunderstood. Many teams think it’s time-consuming because of its deep analysis of the entire project source code. This can lead organizations to believe SAST is incompatible with rapid development methodologies, but that belief is unfounded. Nearly instantaneous results from static analysis security testing are available within the developer’s IDE, providing immediate feedback and helping developers avoid vulnerabilities. Modern SAST tools also perform incremental analysis, reporting results only for the code that changed between two builds.
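One simple way to picture incremental analysis is to fingerprint each file and re-analyze only those whose fingerprint changed. The sketch below assumes content hashing and uses hypothetical file names. Real tools typically also re-check code that depends on a changed file, which this example leaves out.

```python
import hashlib


def fingerprint(files):
    """Map each path to a SHA-256 digest of its contents."""
    return {path: hashlib.sha256(text.encode("utf-8")).hexdigest()
            for path, text in files.items()}


def files_to_analyze(previous, current):
    """Return paths that are new or changed since the previous build."""
    return sorted(path for path, digest in current.items()
                  if previous.get(path) != digest)


build_1 = fingerprint({"auth.c": "int login();", "util.c": "int add();"})
build_2 = fingerprint({"auth.c": "int login(char *u);",  # changed
                       "util.c": "int add();",           # unchanged
                       "log.c": "void log_msg();"})      # new

print(files_to_analyze(build_1, build_2))
```

Here only `auth.c` and `log.c` would be sent to the analyzer for the second build.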

Deal With Noisy Results

Traditional static analysis security testing tools often include many “informational” results and low-severity issues around proper coding standards. Modern tools, like those that Parasoft offers, allow users to select which rules/checkers to use and filter results by the severity of the error, hiding those that do not warrant investigation.

Many security standards from OWASP, CWE, CERT, and the like have risk models that help identify the most important vulnerabilities. Your SAST tool should use this information to help you focus on what matters most. Users can filter findings further based on other contextual information like metadata on the project, the age of the code, and the developer or team responsible for the code. Tools like Parasoft’s combine this information with artificial intelligence (AI) and machine learning (ML) to help further determine the most critical issues.
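Rule selection and severity filtering can be sketched in a few lines. This is an illustration under assumptions: the `Finding` type, the `triage` function, the severity scale, and the severities assigned to each finding are hypothetical, while the CWE identifiers are real (CWE-79 is cross-site scripting, CWE-89 is SQL injection, CWE-120 is a classic buffer overflow).

```python
from dataclasses import dataclass

SEVERITY_RANK = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class Finding:
    rule: str
    severity: str
    location: str


def triage(findings, enabled_rules, min_severity):
    """Keep findings from enabled rules at or above min_severity,
    most severe first."""
    threshold = SEVERITY_RANK[min_severity]
    kept = [f for f in findings
            if f.rule in enabled_rules
            and SEVERITY_RANK[f.severity] >= threshold]
    return sorted(kept, key=lambda f: SEVERITY_RANK[f.severity], reverse=True)


findings = [
    Finding("STYLE-001", "info", "util.c:10"),      # hypothetical style rule
    Finding("CWE-79", "high", "page.c:88"),
    Finding("CWE-89", "critical", "db.c:42"),
    Finding("CWE-120", "high", "copy.c:7"),
]

# Start small: only injection-style rules, high severity and above.
selected = triage(findings, enabled_rules={"CWE-79", "CWE-89"},
                  min_severity="high")
for f in selected:
    print(f"{f.severity:<8} {f.rule:<8} {f.location}")
```

The informational style finding and the rule that was not enabled drop out, leaving two findings ordered by severity.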

Focus on Developers

Successful deployments are often developer-focused. They provide the tools and guidance developers need to build security into the software. This is important in Agile and DevOps/DevSecOps environments, where rapid feedback is critical to maintaining velocity. IDE integrations allow security testing directly from the developer’s work environment—at the file level, project level, or simply to evaluate the code that changed.

Use Smart Rule Configuration

When analyzing software for security issues, one size does not fit all organizations. The rules/checkers must address the issues that matter for the specific application. Organizations just starting testing for security may wish to limit rules to the most common security issues like cross-site scripting and SQL injection. Other organizations have specific security requirements based on regulations like PCI DSS. Look for solutions that allow controlled rule/checker configuration that fits your specific needs, not a generic configuration.

Integrating Static and Dynamic Analysis for Comprehensive Security Testing

In the case of software security tools, the whole is greater than the sum of its parts. This is true of application security testing because the various tools have strengths in different areas and weaknesses that are mitigated by the combination.

Benefits of Integrating SAST and DAST

The combination of SAST and DAST is a natural fit due to the fundamental difference in the technology used in static tools versus dynamic tools. Here are some of the benefits of integrating both into your security testing.

- Better security coverage. SAST tools cover a wide variety of security issues and can enforce secure coding standards. DAST tools often cover more specific errors, some unique to runtime environments, and they catch serious vulnerabilities that were previously undetected during development.

- Early detection. SAST tools excel at detecting vulnerabilities during development as the code is being written. In addition, the enforcement of secure coding standards is critical to preventing poor software practices that lead to later vulnerabilities. Integrating DAST has the added benefit of catching serious vulnerabilities that are missed at these early stages.

- Better results. Overall, the combination of two tools that detect vulnerabilities in different ways is better for security testing. SAST tools, while susceptible to false positives, can detect vulnerabilities that are missed during testing. DAST tools, on the other hand, have a high degree of confidence in their findings, mostly eliminating the issue of false positives.

- Improved collaboration. SAST and DAST tools are often used by different teams in the organization. SAST tools are typically the domain of software developers while software developers, testers, and security experts all use DAST tools. Integrating the various application security tools encourages these teams to share and collaborate on a common set of vulnerabilities to tackle. Early prevention and later runtime detection are the best of both worlds.

- Less compliance workload and risk. Security compliance is a time-consuming and costly undertaking. The risks of non-compliance can be severe. Integrating application security tools together is a best practice that improves an organization’s security posture. These tools also create an automated “paper” trail of security testing activities that demonstrates due diligence. The increased coverage and better results from integrating these tools pay off in reduced security and compliance risk.

Challenges & Best Practices for Integration

Integrating tools, in general, can be challenging. Tools from different vendors may not play well together and the reports from each tool may conflict and be in varying formats. This leads to the following challenges.

- Workflow integration. All development tools, security-related or not, need to be well integrated into existing developer workflows and CI/CD pipelines. Therefore, each individual tool must integrate well into the environment and with each security-related tool. Each tool needs to add value individually as well as in an integrated security toolbox.

- Data integration. Each security tool creates its own set of reports, which may be in incompatible formats. Due to the nature of SAST and DAST tools, for example, there could also be cases where they are reporting on the same vulnerabilities. It’s critical that the data from all the tools be orchestrated in some fashion.

- Training. Tools have different interfaces. Developers and testers need to be trained in using all the tools. They need to learn how to use the tools day-to-day and how to process, remediate, and update the findings of each tool.

- Cost. Security tools have associated licensing fees and require training efforts for each. In addition, there is the cost of integrating the tools together and the time needed to process the reports from them.

There’s excellent ROI for integrating your application security toolbox, so this list shouldn’t discourage the effort. Here are some best practices to help ease the integration:

- Evaluate tool compatibility and coverage before purchase. Before integrating SAST and DAST tools, assess their compatibility and coverage. Consider the programming languages, frameworks, and application types they support. Ensure that the tools complement each other’s strengths and cover a wide range of security vulnerabilities.

- Establish a clear workflow. Outline the steps and responsibilities for using both SAST and DAST tools. This should include when and how each tool is used, who is responsible for executing the tests, and how the results are shared and tracked. In addition, how to use the results from both tools effectively should be defined.

- Automate data integration. Use security orchestration tools or make use of the built-in integration capabilities of the SAST and DAST tools. Establish a common repository or a centralized platform that can aggregate and correlate the results from both tools. Automate the collection of results from both tools to minimize any manual effort and increase efficiency.

- Establish a security training program. For better efficiency and workflow integration, establish a common training program specific to your organization that covers specific security workflows, tools, policies, and practices. This is more effective than piecemeal training for each individual tool. An important aspect of SAST and DAST tool training is how to analyze the results, understand the risks from reported vulnerabilities, and prioritize fixes.

- Look for cost reductions and efficiencies. Some vendors provide both types of tools in an integrated suite, which might be more attractive. Analyze the total cost of ownership versus the potential ROI for the combined solution. Be sure to compare costs associated with tool integration, infrastructure requirements, and maintenance expenses.

- Re-evaluate security tool integrations on a regular basis. Review and update security tool integrations, as tools are improving with every release, as are your security requirements. Keep abreast of new technologies, frameworks, and security threats, as all of this impacts your security workflows and tool usage.
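The "automate data integration" practice above can be sketched as a small correlation step. This is a minimal sketch under assumptions: real tools export richer report formats, and the dictionary fields (`cwe`, `component`, `detail`) and the sample findings are hypothetical.

```python
from collections import defaultdict


def correlate(reports):
    """Group findings from several tools by (CWE id, component)."""
    merged = defaultdict(lambda: {"tools": set(), "details": []})
    for tool, findings in reports.items():
        for finding in findings:
            key = (finding["cwe"], finding["component"])
            merged[key]["tools"].add(tool)
            merged[key]["details"].append(finding["detail"])
    return dict(merged)


reports = {
    "SAST": [
        {"cwe": "CWE-89", "component": "orders",
         "detail": "db.c:42 tainted query"},
        {"cwe": "CWE-120", "component": "upload",
         "detail": "copy.c:7 unchecked copy"},
    ],
    "DAST": [
        {"cwe": "CWE-89", "component": "orders",
         "detail": "POST /orders id=1' OR '1'='1"},
    ],
}

merged = correlate(reports)
# Findings reported by more than one tool are strong candidates to fix first.
confirmed = sorted(key for key, entry in merged.items()
                   if len(entry["tools"]) > 1)
print(confirmed)
```

Grouping on a shared key lets a vulnerability that both tools report appear once, with the evidence from each tool attached.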

Other Types of Application Security Testing

In addition to SAST and DAST, there are other types of application security testing.

- Interactive application security testing. IAST combines interaction with the program coupled with observation to detect software vulnerabilities. In other words, it monitors the application’s behavior while it’s running and provides continuous feedback on all security issues it discovers.

- Runtime application self-protection. RASP uses runtime technology that’s installed within an application to provide an additional layer of security. This layer monitors the application in real time and can detect and block any attempted exploits or attacks.

- Mobile application security testing. Focused on mobile applications, MAST leverages DAST and SAST plus additional features focused on mobile application security. MAST may also extend to device-specific exploits like jailbreaking, rooting the device, privacy infringement, and data leakage.

- Software composition analysis. SCA inspects container images, source code, binary files, and more in search of open-source components. The intention is to detect third-party and open-source dependencies and create a software bill of materials (SBOM): a list of all the open-source and third-party components present in a codebase. With an SBOM, you can respond quickly to the security, license, and operational risks that come with open-source use.

It’s important to note that these are application security testing techniques that fit within an ecosystem of security techniques. For example, teams commonly use application security orchestration tools to organize, analyze, and report on the information from all of these techniques.
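The SBOM idea from the software composition analysis item above can be illustrated with a toy inventory. Everything here is hypothetical: the `name==version` manifest format, the library names, and the advisory identifier are invented for the example. Real SCA tools read many manifest types and consult curated vulnerability databases.

```python
def parse_manifest(text):
    """Turn 'name==version' lines into a list of component records."""
    components = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments and blanks
        if not line:
            continue
        name, _, version = line.partition("==")
        components.append({"name": name.strip(),
                           "version": version.strip() or "unknown"})
    return components


def flag_components(components, advisories):
    """Return components whose (name, version) appears in advisories."""
    return [(c["name"], c["version"], advisories[(c["name"], c["version"])])
            for c in components
            if (c["name"], c["version"]) in advisories]


MANIFEST = """\
# hypothetical dependency manifest
examplelib==1.2.0
otherlib==4.0.1   # pinned
unpinnedlib
"""

ADVISORIES = {("examplelib", "1.2.0"): "EXAMPLE-ADVISORY-0001"}  # made up

sbom = parse_manifest(MANIFEST)
flagged = flag_components(sbom, ADVISORIES)
print(sbom)
print(flagged)
```

Once the component list exists, checking it against a new advisory is a lookup, not a fresh search through the codebase.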

Leverage AI & ML to Identify the Most Important Violations

Artificial intelligence (AI) and machine learning (ML) technologies enhance Parasoft’s static analysis solutions to identify hotspots and intersections between all of the found violations. This enables teams to focus efforts on the part of the codebase that is the root cause for many other issues. What’s more, ML monitors and learns from the behavior of your development teams to differentiate between what’s important and what’s not.

Training your AI model based on the historic behavior of the development team provides a multi-dimensional analysis of the findings, while ML clusters data to identify correlated, related, or similar violations.

Combining the two technologies is even better. The combination learns which false-positive results to ignore and which true positives to highlight. It shrinks a mountain of information down to a few highly valuable diamonds.

For example, static analysis can reveal thousands of violations in a typical codebase. Even though you might be able to identify hundreds of defects to address, you won’t be able to fix everything in the amount of time available. With AI and ML finding violation hotspots, you can fix multiple defects at the same time by identifying the single piece of code that’s causing all of them.
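The hotspot idea can be pictured with a plain counting heuristic. This is not Parasoft’s AI/ML approach, only a simple stand-in. It assumes each violation record already carries a `root_cause` location, for example from flow-analysis trace data, and the records themselves are made up.

```python
from collections import Counter

# Made-up violation records; root_cause is assumed to come from trace data.
violations = [
    {"rule": "CWE-476", "reported_at": "api.c:40", "root_cause": "parse.c:12"},
    {"rule": "CWE-476", "reported_at": "cli.c:18", "root_cause": "parse.c:12"},
    {"rule": "CWE-476", "reported_at": "net.c:77", "root_cause": "parse.c:12"},
    {"rule": "CWE-120", "reported_at": "copy.c:7", "root_cause": "copy.c:3"},
]


def hotspots(violations, top=3):
    """Rank root-cause locations by how many violations trace back to them."""
    counts = Counter(v["root_cause"] for v in violations)
    return counts.most_common(top)


for location, count in hotspots(violations):
    print(f"{location}: {count} violation(s)")
```

In this sample, one fix at `parse.c:12` would clear three of the four reported violations.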

Prevention Is Better Than Detection

Build security into your application. It’s much more effective and efficient than trying to secure an application by bolting security on top of a finished application at the end of the SDLC. Just as you cannot test quality into an application, you cannot test security into it. SAST is the key to early detection, and it helps prevent security vulnerabilities by guiding developers to write secure code from the start.

SAST tools enable organizations to embrace software security from the early stages of development onward and provide their software engineers with the tools and guidance they need to build secure software.

“MISRA”, “MISRA C” and the triangle logo are registered trademarks of The MISRA Consortium Limited. ©The MISRA Consortium Limited, 2021. All rights reserved.