Parasoft Blog

Discover how to monitor code coverage metrics and efficiently identify and address coverage gaps for safety-critical software using C/C++test CT with TÜV SÜD-certified GoogleTest.

For teams developing safety-critical systems, meeting code coverage mandates isn’t just about running tests—it requires a fundamentally integrated workflow between your unit testing framework, requirements traceability, and coverage tooling.

Unit testing is a fundamental element of the verification and validation (V&V) process for embedded, safety-critical software. All major functional safety standards require objective evidence of testing completeness at the unit level.

This completeness is typically demonstrated through requirements traceability and structural code coverage reports. Consequently, any unit testing framework used in a compliance context must integrate seamlessly with the project’s requirements management system (RMS) and code coverage tooling.

In an ideal development scenario, teams begin with well-defined, unambiguous requirements accompanied by a complete test specification. Implementation proceeds in alignment with these requirements, and code coverage reports serve only as objective evidence that verification is complete at the source code level.

Teams rarely achieve this ideal in practice. They often begin implementation and test development with incomplete or evolving requirements.

In some cases, formal requirements may be entirely absent—for example, when V&V and safety processes are applied retrospectively to a legacy codebase that was not originally developed under a functional safety framework.

In such situations, development, requirements definition, and test creation proceed in parallel, and teams end up using coverage gaps as the starting point for understanding what the code is supposed to do.

A coverage gap may indicate dead code, a missing test case, or an incomplete or undefined requirement.

In all cases, the critical task is to understand why a given code construct remains uncovered. For relatively simple metrics such as line coverage, this analysis is often straightforward. However, more advanced criteria like MC/DC (modified condition/decision coverage) can make root cause analysis significantly more challenging.
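To make the difference concrete, here is a minimal sketch built around a hypothetical decision `a && (b || c)`. The decision, the `Vector3` alias, and every function name are assumptions chosen for illustration; none of them come from C/C++test CT. The sketch checks, under the unique-cause interpretation of MC/DC, whether the executed test vectors contain a pair for each condition that differs only in that condition and flips the decision outcome.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

// One test vector: the values of conditions a, b, c (hypothetical example).
using Vector3 = std::array<bool, 3>;

// Hypothetical decision under test: a && (b || c).
bool decision(const Vector3& v) { return v[0] && (v[1] || v[2]); }

// True if x and y differ only in condition `idx` and yield different outcomes,
// i.e. they form a unique-cause independence pair for that condition.
bool isIndependencePair(const Vector3& x, const Vector3& y, std::size_t idx) {
    for (std::size_t i = 0; i < x.size(); ++i) {
        const bool differs = (x[i] != y[i]);
        if (differs != (i == idx)) return false;
    }
    return decision(x) != decision(y);
}

// For each condition, reports whether the executed vectors demonstrate
// that the condition independently affects the decision outcome.
std::array<bool, 3> conditionsShown(const std::vector<Vector3>& executed) {
    std::array<bool, 3> shown{};  // value-initialized to false
    for (std::size_t idx = 0; idx < shown.size(); ++idx) {
        for (const Vector3& x : executed) {
            for (const Vector3& y : executed) {
                if (isIndependencePair(x, y, idx)) shown[idx] = true;
            }
        }
    }
    return shown;
}

// Usage sketch: two vectors reach both outcomes of the decision,
// yet only condition `a` has been shown to act independently.
void usageExample() {
    std::vector<Vector3> executed = {Vector3{true, true, false},
                                     Vector3{false, true, false}};
    std::array<bool, 3> shown = conditionsShown(executed);
    assert(shown[0] && !shown[1] && !shown[2]);

    // Two further vectors close the gaps for `b` and `c`.
    executed.push_back(Vector3{true, false, false});
    executed.push_back(Vector3{true, false, true});
    shown = conditionsShown(executed);
    assert(shown[0] && shown[1] && shown[2]);
}
```

The first two vectors execute every line and both outcomes of the decision, so a line or decision coverage report would show no gap. The MC/DC gap for `b` and `c` only appears once vectors are compared pairwise, which is why root cause analysis takes more effort for this metric.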

While this is far from the ideal workflow, in practice the process often begins with identifying a coverage gap, followed by analyzing the missing stimuli required to exercise the uncovered code.

Based on this analysis, developers create a test case to cover the previously untested behavior.

The resulting test case is the basis for improving system understanding and guiding subsequent refinements and requirement definitions.

Well-integrated unit testing tools can support this process by providing details about coverage deficiencies and, in some cases, assisting with generating the initial test cases to drive requirement refinements as described above.

Parasoft C/C++test CT provides a TÜV-certified distribution of GoogleTest along with integrated code coverage reporting, significantly simplifying coverage gap analysis. It also includes AI-based capabilities for automated test case generation.

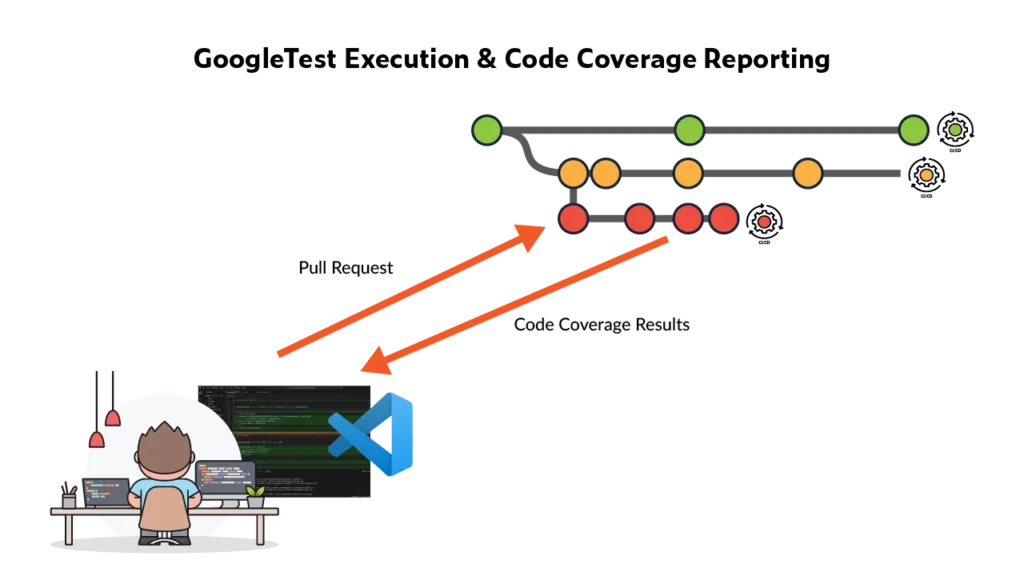

With C/C++test CT, teams can execute their GoogleTest test suites and collect code coverage both locally and within CI pipelines.

Many teams value the ability to run a focused subset of GoogleTest test cases locally prior to committing changes, followed by execution of the full test suite in the CI pipeline. Coverage results can then be retrieved from CI pipelines and analyzed directly within the IDE, enabling an efficient feedback loop. For teams using Visual Studio Code, C/C++test CT provides streamlined support for this workflow.

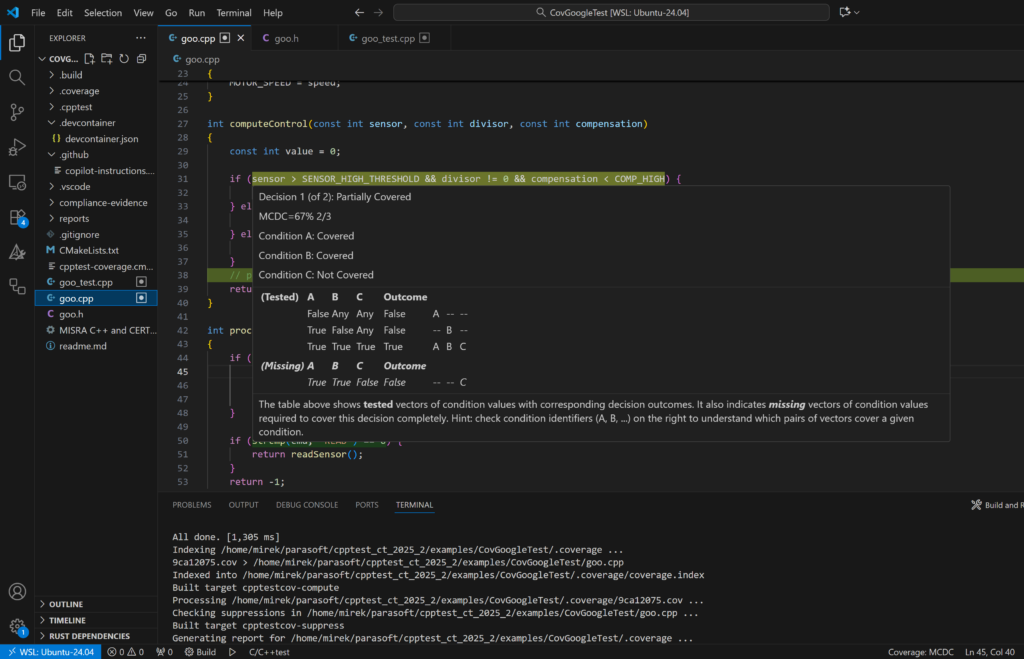

Once coverage reports are available, the next step is gap analysis. C/C++test CT simplifies this process, particularly for more demanding coverage criteria such as MC/DC.

The screenshot below illustrates an example of MC/DC coverage results for a specific decision within a function.

An example of MC/DC coverage results for a specific decision within a function in Parasoft C/C++test CT.

As shown, the results provide a detailed breakdown of covered and uncovered conditions within the decision, along with a table of the precomputed minimal set of test vectors required to achieve 100% coverage. This minimal set is particularly valuable because it directs developers toward the specific condition combinations that must be exercised to satisfy MC/DC requirements.
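As a rough illustration of what a minimal set of test vectors means, the sketch below brute-forces one for a hypothetical three-condition decision, `a && (b || c)`. The decision, the bit encoding, and the function names are assumptions made for this example. It is not a description of how C/C++test CT computes its table, and exhaustive search is only practical for small decisions.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <vector>

constexpr unsigned kConditions = 3;               // a, b, c
constexpr unsigned kVectors = 1u << kConditions;  // 8 possible input combinations

// Hypothetical decision: a && (b || c); bit 0 = a, bit 1 = b, bit 2 = c.
bool decision(unsigned v) {
    const bool a = (v & 1u) != 0;
    const bool b = (v & 2u) != 0;
    const bool c = (v & 4u) != 0;
    return a && (b || c);
}

// True if the vectors selected by `subset` (bit i set = vector i executed)
// contain a unique-cause independence pair for every condition.
bool satisfiesMcdc(unsigned subset) {
    for (unsigned cond = 0; cond < kConditions; ++cond) {
        bool shown = false;
        for (unsigned v = 0; v < kVectors && !shown; ++v) {
            const unsigned w = v ^ (1u << cond);  // v with only `cond` flipped
            const bool bothExecuted =
                ((subset >> v) & 1u) != 0 && ((subset >> w) & 1u) != 0;
            shown = bothExecuted && decision(v) != decision(w);
        }
        if (!shown) return false;
    }
    return true;
}

// Exhaustively searches all subsets of vectors and returns one of minimal size.
std::vector<unsigned> minimalMcdcSet() {
    unsigned best = (1u << kVectors) - 1u;  // start from "all vectors executed"
    for (unsigned subset = 0; subset < (1u << kVectors); ++subset) {
        if (satisfiesMcdc(subset) &&
            std::bitset<kVectors>(subset).count() <
                std::bitset<kVectors>(best).count()) {
            best = subset;
        }
    }
    std::vector<unsigned> result;
    for (unsigned v = 0; v < kVectors; ++v) {
        if (((best >> v) & 1u) != 0) result.push_back(v);
    }
    return result;
}

// Usage sketch: three conditions need four vectors (n + 1) in this example.
void usageExample() {
    const std::vector<unsigned> vectors = minimalMcdcSet();
    assert(vectors.size() == kConditions + 1);
}
```

In a GoogleTest suite, each vector in such a set would typically become one `TEST` body that sets up the inputs and checks the outcome with an assertion such as `EXPECT_TRUE` or `EXPECT_FALSE`.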

Once coverage gaps are understood, the next step is typically to develop one or more test cases that exercise the uncovered code, enabling teams to assess whether updates or refinements to the requirements are necessary. As discussed, while this represents a reactive workflow, it’s a common practice in many projects.

These test cases can be created manually, based on coverage insights provided by C/C++test CT, or generated automatically using available tooling support.

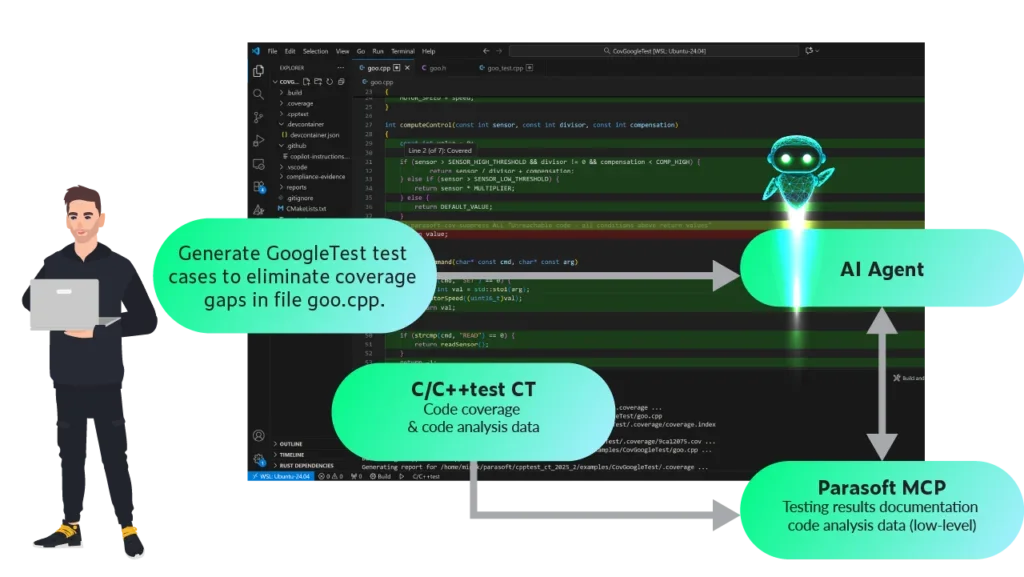

C/C++test CT includes an MCP server that exposes structured coverage data to AI agents. For advanced metrics such as MC/DC, this data includes detailed information about missing test vectors required to fully exercise all conditions within a given decision.

With C/C++test CT’s MCP server, users can send the AI agent many kinds of queries, for example, "Generate GoogleTest test cases to eliminate coverage gaps in file <>."

The graphic below shows a simplified view of the data flow between the coverage engine, MCP server, and AI agent.

Simplified data flow between the coverage engine, MCP server, and AI agent

Using this structured input, AI agents can automatically generate GoogleTest test cases targeting uncovered scenarios, helping to improve coverage while reducing developer effort. Developers can then review these generated tests and assess their relevance with respect to system behavior and existing or yet-to-be-defined requirements.

By combining a TÜV-certified GoogleTest framework with advanced coverage analysis and AI-powered test generation, C/C++test CT streamlines the path to full code coverage and compliance. It empowers teams developing with modern C++ to identify testing gaps, generate missing tests, and maintain an efficient, scalable verification workflow.

See how your team can achieve its code coverage goals using C/C++test CT integrated with the only TÜV-certified GoogleTest.