How to Obtain 100% Structural Code Coverage of Safety-Critical Systems

Obtaining structural code coverage of systems is one of the crucial things every software developer and quality engineer should know. Read on to understand structural code coverage and why it’s critical to software testing.

Many software development and verification engineers don’t truly understand why obtaining structural coverage is important. Many do it only because their industry’s functional safety standard mandates it, and don’t take it seriously.

Safety-critical systems transport passengers without a driver, as with ADAS, let autopilots fly people across our skies, and keep patients alive through medical devices. People’s lives depend on these systems, so it’s vital to obtain structural code coverage. Let’s examine what structural coverage is and more reasons why it’s important.

In a nutshell, structural coverage is the identification of code that has been executed and logged for the purpose of determining if the system has been adequately tested. The thoroughness of the coverage in safety-critical systems depends on the safety integrity level (SIL), the automotive safety integrity level (ASIL) used in the automotive industry, or the development assurance level (DAL) commonly used in avionics.

By thoroughness, I’m referring to the structural elements in code. In embedded systems, these are typically broken down into statements, branches, and modified condition/decision combinations (MC/DC). You can also drill down to a much finer level of granularity, such as the object code or assembly language.

You might hear or read about other types of coverage metrics like function, call, loop, condition, jump, decision, and so on. But for embedded safety-critical systems, all you currently need to know about are statement, branch, MC/DC, and object code. The other types mentioned are subsets of these and are therefore already addressed.

There is currently a movement towards adopting Multiple Condition Coverage (MCC), which is more thorough than MC/DC. MCC requires a much greater number of test cases—2 to the power of the number of conditions.

The formula: $2^C$, where $C$ is the number of conditions

Since MCC isn’t officially recommended or mandated by industry process standards ISO 26262, DO-178, IEC 62304, IEC 61508 or EN 50128, I won’t cover it in this blog post.

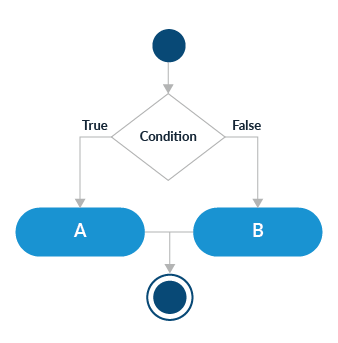

Statement coverage is the simplest level: every statement in the program must be executed at least once. However, code statements can have varying degrees of complexity. For example, a branch statement represents an if-then-else condition in the code. Statements like case or switch are also interpreted as branches. To obtain branch coverage, execution of both the true and the false decision paths must be covered.
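To make the difference between statement and branch coverage concrete, here is a minimal sketch. The function `clamp_speed` is a hypothetical example, not code from any real project.

```c
/* Hypothetical example: clamps a requested speed to a limit. */
int clamp_speed(int requested, int limit)
{
    int result = requested;
    if (requested > limit) {   /* one decision, two outcomes */
        result = limit;        /* executed only on the true outcome */
    }
    return result;
}
```

A single test such as `clamp_speed(120, 100)` executes every statement, so statement coverage is 100%, yet the false outcome of the `if` is never taken. Branch coverage needs a second test, such as `clamp_speed(50, 100)`.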

Branch Flow Chart

Where higher safety levels are of concern, Modified Condition/Decision Coverage (MC/DC) may be required. Branches can grow in complexity: a decision may contain multiple conditions, and every condition must be tested independently.

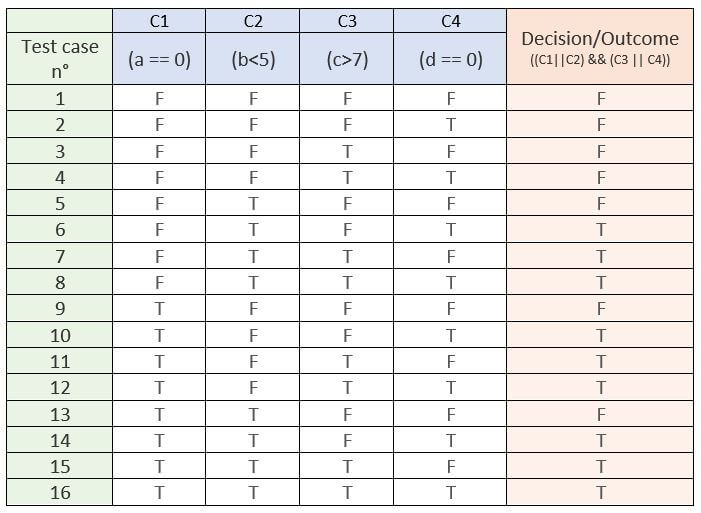

For coverage criteria, this means that every condition in the decision has been shown to independently affect that decision’s outcome. A truth table can be used to help make this analysis as shown below.

Also, every condition in a decision in the program has taken all possible outcomes at least once, and every decision in the program has taken all possible outcomes at least once.

In the example below with 4 conditions, there are 16 possible combinations of condition values. MC/DC can be satisfied with as few as 5 test cases for this example: take the number of conditions and add 1.

The formula: $C + 1$
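The two counts quoted in this post, 2 to the power of C for MCC and C + 1 for MC/DC, can be compared with a small sketch. The function names are hypothetical, and C + 1 is treated here as the minimum usually quoted for MC/DC rather than a guaranteed count for every decision.

```c
/* Exhaustive Multiple Condition Coverage: every combination of C conditions.
   Assumes `conditions` is smaller than the bit width of unsigned long. */
unsigned long mcc_test_count(unsigned conditions)
{
    return 1UL << conditions;                 /* 2^C */
}

/* Minimum number of test cases usually quoted for MC/DC. */
unsigned long mcdc_min_test_count(unsigned conditions)
{
    return (unsigned long)conditions + 1UL;   /* C + 1 */
}
```

For the 4-condition decision discussed here, `mcc_test_count(4)` gives 16 and `mcdc_min_test_count(4)` gives 5. The gap widens quickly: at 10 conditions it is 1024 versus 11.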

MC/DC Decision Truth Table for "if (((a == 0) || (b < 5)) && ((c > 7) || (d == 0)))" Statement

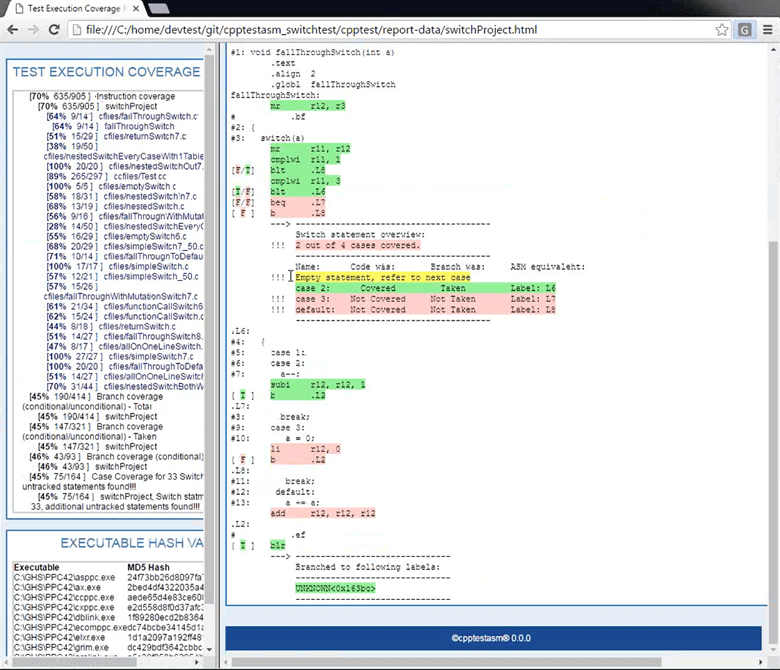
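The independence requirement can also be checked mechanically. The sketch below uses one possible set of five test vectors for the decision in the caption above. The vectors are my own illustration and are not necessarily the rows shown in the figure. The checker looks for unique-cause pairs: two vectors that differ in exactly one condition and produce different decision outcomes.

```c
#include <stdbool.h>
#include <stddef.h>

/* The decision from the truth table caption. */
static bool decision(int a, int b, int c, int d)
{
    return ((a == 0) || (b < 5)) && ((c > 7) || (d == 0));
}

/* One test vector: concrete inputs that set each condition true or false. */
struct vec { int a, b, c, d; };

/* Illustrative set of C + 1 = 5 vectors (an assumption, not the figure's table).
   A is (a == 0), B is (b < 5), C is (c > 7), D is (d == 0). */
static const struct vec mcdc_set[5] = {
    { 0, 9, 8, 1 },  /* A=T B=F C=T D=F -> true  */
    { 1, 9, 8, 1 },  /* A=F B=F C=T D=F -> false */
    { 1, 4, 8, 1 },  /* A=F B=T C=T D=F -> true  */
    { 0, 9, 7, 1 },  /* A=T B=F C=F D=F -> false */
    { 0, 9, 7, 0 },  /* A=T B=F C=F D=T -> true  */
};

/* Packs the four condition values of a vector into bits 0..3. */
static unsigned cond_mask(const struct vec *v)
{
    return (unsigned)(v->a == 0) | ((unsigned)(v->b < 5) << 1) |
           ((unsigned)(v->c > 7) << 2) | ((unsigned)(v->d == 0) << 3);
}

/* True if some pair differs only in condition `i` and flips the outcome. */
static bool shows_independence(const struct vec *set, size_t n, unsigned i)
{
    for (size_t p = 0; p < n; p++) {
        for (size_t q = p + 1; q < n; q++) {
            bool only_i = (cond_mask(&set[p]) ^ cond_mask(&set[q])) == (1u << i);
            bool flips = decision(set[p].a, set[p].b, set[p].c, set[p].d) !=
                         decision(set[q].a, set[q].b, set[q].c, set[q].d);
            if (only_i && flips) {
                return true;
            }
        }
    }
    return false;
}

/* True if every one of the four conditions has an independence pair. */
bool mcdc_satisfied(const struct vec *set, size_t n)
{
    for (unsigned i = 0; i < 4u; i++) {
        if (!shows_independence(set, n, i)) {
            return false;
        }
    }
    return true;
}
```

Dropping the last vector leaves condition D without an independence pair, so `mcdc_satisfied(mcdc_set, 4)` fails while `mcdc_satisfied(mcdc_set, 5)` passes.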

For the most stringent safety-critical applications, such as avionics software developed to DO-178B/C Level A, the process standard mandates object code coverage. This is because a compiler or linker can generate additional code that is not directly traceable to source code statements. Therefore, assembly-level coverage must be performed.

Imagine the rigor and labor cost of having to perform this task. Fortunately, there’s Parasoft ASMTools, an automated solution for obtaining object code coverage.

Parasoft ASMTools Assembly Language Code Coverage

More often than not, code coverage is identified by instrumenting the code. Instrumenting means adding extra code to the user code so that, during execution, it can record whether a given statement, branch, or MC/DC condition has been executed.
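Here is a minimal hand-written sketch of what instrumented code can look like. The `COV_PROBE` macro, the `cov_hits` buffer, and the `clamp_speed` function are hypothetical names for illustration only. Coverage tools insert their own probes automatically, and their generated code will differ.

```c
#include <stdint.h>

#define COV_PROBE_COUNT 4u

/* One bit per probe; a set bit means "this point was executed". */
static uint8_t cov_hits[(COV_PROBE_COUNT + 7u) / 8u];

/* Marks probe `id` as executed. */
#define COV_PROBE(id) (cov_hits[(id) / 8u] |= (uint8_t)(1u << ((id) % 8u)))

/* Returns 1 if probe `id` has been executed, 0 otherwise. */
static int cov_was_hit(unsigned id)
{
    return (int)((cov_hits[id / 8u] >> (id % 8u)) & 1u);
}

/* User code after instrumentation. */
int clamp_speed(int requested, int limit)
{
    int result = requested;
    COV_PROBE(0u);          /* function entry */
    if (requested > limit) {
        COV_PROBE(1u);      /* true outcome of the decision */
        result = limit;
    } else {
        COV_PROBE(2u);      /* false outcome; the else exists only to hold the probe */
    }
    COV_PROBE(3u);          /* statement after the decision */
    return result;
}
```

After calling `clamp_speed(120, 100)`, probes 0, 1, and 3 are set while probe 2 is not, which is exactly the gap a branch coverage report would flag.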

Based on the embedded target or device, the coverage data can be stored in the file system, written to memory, or sent out through various communication channels, such as the serial port, TCP/IP port, USB and even JTAG.
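As one illustration of getting coverage data off a target, the sketch below encodes a raw coverage buffer as hexadecimal text, which is easy to push through a serial port and parse on the host. The function name and the text format are assumptions of mine. The actual transmit call is target-specific and is left out.

```c
#include <stddef.h>
#include <stdint.h>

/* Encodes `len` bytes of coverage data as uppercase hex text.
   `out` must have room for 2 * len + 1 characters.
   Returns the number of characters written, not counting the terminator. */
size_t cov_encode_hex(const uint8_t *data, size_t len, char *out)
{
    static const char digits[] = "0123456789ABCDEF";
    for (size_t i = 0; i < len; i++) {
        out[2 * i]     = digits[data[i] >> 4];      /* high nibble */
        out[2 * i + 1] = digits[data[i] & 0x0Fu];   /* low nibble */
    }
    out[2 * len] = '\0';
    return 2 * len;
}
```

The resulting string can then be handed to whatever channel the target offers, whether that is a UART write routine, a socket, or a memory region read out over JTAG.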

Be aware that code instrumentation causes code bloat and this increase in code size may impact the ability to load the code onto your memory-constrained target hardware for testing.

The workaround is to instrument part of the code.

Depending on your target constraints, hopefully you will not have too many instrumented partitions to go through, because rerunning the same tests for each partition is time-consuming and costly. Note also that instrumentation can distort timing and degrade performance.
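Partial instrumentation can be sketched with build switches that turn probes on for one partition at a time. The macro names, the partition names, and the two functions below are all hypothetical. A real tool would normally let you select files or modules in its configuration instead.

```c
#include <stdint.h>

/* Hypothetical build switches: enable one partition per build,
   for example with -DCOV_PARTITION_CONTROL=1 -DCOV_PARTITION_SENSORS=0. */
#ifndef COV_PARTITION_SENSORS
#define COV_PARTITION_SENSORS 1
#endif
#ifndef COV_PARTITION_CONTROL
#define COV_PARTITION_CONTROL 0
#endif

static uint8_t cov_hits[2];

/* A probe expands to nothing when its partition is disabled,
   so disabled partitions add no code to the image. */
#if COV_PARTITION_SENSORS
#define SENSORS_PROBE(id) (cov_hits[(id)] = 1u)
#else
#define SENSORS_PROBE(id) ((void)0)
#endif

#if COV_PARTITION_CONTROL
#define CONTROL_PROBE(id) (cov_hits[(id)] = 1u)
#else
#define CONTROL_PROBE(id) ((void)0)
#endif

int read_sensor_scaled(int raw)
{
    SENSORS_PROBE(0);
    return raw * 2;
}

int control_step(int error)
{
    CONTROL_PROBE(1);
    return -error;
}
```

With the defaults above, only the sensors partition records coverage. The same tests are then rerun with the control partition enabled, and the results from both builds are combined.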

Let’s dive into how organizations obtain code coverage for their embedded safety and security-critical systems.

Whether your coverage requirement is a mandated 100% statement, branch, and MC/DC coverage or an optional, self-imposed 80%, there are several testing methods you can use to meet your goals. The ones covered below are system testing, unit testing, and manual methods.

Combining the coverage metrics from these various practices is typical. But how exactly is code coverage identified?

Obtaining code coverage through system testing is an excellent method to determine if enough testing has been performed. The approach is to run all your system tests and then examine what parts of the code have not been exercised.

The unexecuted code implies that new test cases may be needed to exercise untouched code where a defect may be lurking. It also helps answer the question: Have I done enough testing?

When I’ve performed code coverage during system testing, the average resulting metric is 60% coverage. Much of the 40% unexecuted code is due to defensive code in your application.

What I mean by defensive is code that will only execute when the system enters a fault or problematic state that may be difficult to produce. Conditions like memory leakage, corruption, or other types of faults caused by hardware failure may take weeks, months, or years to encounter.

There is also defensive code mandated by your coding guidelines that system testing can never execute. For these reasons, system testing cannot take you to 100% structural code coverage. You will need to employ other testing methods like manual and/or unit testing to get you to 100%.

Be aware that process standards allow the merging of coverage metrics obtained from various testing methods.

As mentioned, unit testing can be used as a complementary approach to system testing in obtaining 100% coverage. Obtaining code coverage through unit testing is one of the more popular methods used, but it does not expose whether you have done enough testing of the system because the focus is at the unit level (function/procedure).

The goal here is to create a set of unit test cases that exercise the entire unit at the required coverage compliance need (statement, branch, and MC/DC) in order to reach 100% coverage for that single unit. This is repeated for every unit until the entire code base is covered. However, to get the most out of unit testing, do not solely focus on obtaining code coverage. That can generally be accomplished through sunny-day scenario test cases.

Truly exercise the unit through sunny-day and rainy-day scenarios, ensuring robustness, safety, security, and low-level requirements traceability. Let code coverage be a byproduct of your test cases and fill in coverage where needed.

To help expedite code coverage through unit testing, configurable and automated test case generation capabilities exist in Parasoft C/C++test. Test cases can be automatically generated to test for use of null pointers, min-mid-max ranges, boundary values, and much more. This automation can get you far. In minutes, you’ll obtain a substantial amount of code coverage.

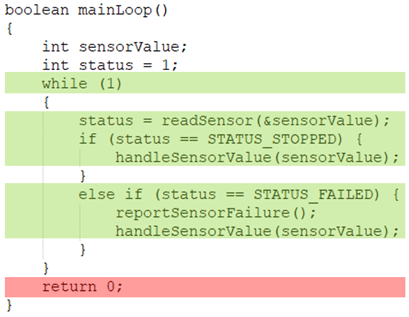

However, as in system testing, obtaining 100% code coverage is elusive due to the use of defensive code or formal language semantics. At the granular level of a unit, defensive code may come in the form of a default statement in a switch. If every possible case in a switch is captured, this leaves the default statement unreachable. In the example below, the return 0; will never get executed because the while (1) is infinite.

Unreachable return 0; Statement
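The two situations described above can be sketched as follows. This is my own minimal illustration with hypothetical names, not the original listing. It shows a `default` that cannot be reached when every enumerator has a case, and a `return 0;` that cannot be reached after an infinite loop.

```c
typedef enum { MODE_IDLE, MODE_RUN, MODE_STOP } drive_mode;

/* Every enumerator has its own case, so the defensive default
   cannot run while `m` holds a valid drive_mode value. */
int mode_to_pwm(drive_mode m)
{
    switch (m) {
    case MODE_IDLE: return 0;
    case MODE_RUN:  return 80;
    case MODE_STOP: return 0;
    default:        return -1;   /* defensive, unreachable in normal tests */
    }
}

static volatile int tick_count = 0;

/* Stand-in for the periodic work a real task would do. */
static void service_tick(void)
{
    tick_count++;
}

/* Typical embedded task body: the loop never exits. */
int control_task(void)
{
    while (1) {
        service_tick();
    }
    return 0;   /* unreachable: no test case can ever execute this line */
}
```

A unit test can cover all three cases of `mode_to_pwm` and the body of `service_tick`, but no test input will reach the `default` or the final `return 0;`.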

So how does one obtain 100% coverage for these special cases?

Answer: Manual methods need to be deployed.

The user can label or annotate the statement as covered. One way is to use a debugger to modify the call stack and execute the return 0; statement. Visually witness the execution and, at minimum, document the file name, line number, and code statement that is now considered covered.

The coverage obtained through manual or visual inspection, along with its report, can be used to supplement the coverage captured through unit testing. Together, the two coverage reports can be used to prove 100% structural code coverage.

If system testing coverage took place and is to be included, all three coverage reports (system, unit, manual) can be used to show and prove 100% coverage and compliance.
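Conceptually, merging reports is a union: a coverage point counts as covered if any testing method covered it. The sketch below assumes each report has been reduced to a bitmap with one bit per coverage point. That format and the function names are assumptions for illustration. Reporting tools work with their own report formats.

```c
#include <stddef.h>
#include <stdint.h>

/* OR-merges `src` into `dst`: a point is covered if any run covered it. */
void cov_merge(uint8_t *dst, const uint8_t *src, size_t len)
{
    for (size_t i = 0; i < len; i++) {
        dst[i] |= src[i];
    }
}

/* Counts the covered points among the first `points` bits of `map`. */
size_t cov_count(const uint8_t *map, size_t points)
{
    size_t covered = 0;
    for (size_t i = 0; i < points; i++) {
        covered += (map[i / 8] >> (i % 8)) & 1u;
    }
    return covered;
}
```

For example, with 10 coverage points, a system test bitmap of `{0x3F, 0x00}` covers 6 of them. Merging a unit test bitmap of `{0xF0, 0x01}` raises that to 9, and merging a manual bitmap of `{0x00, 0x02}` reaches all 10.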

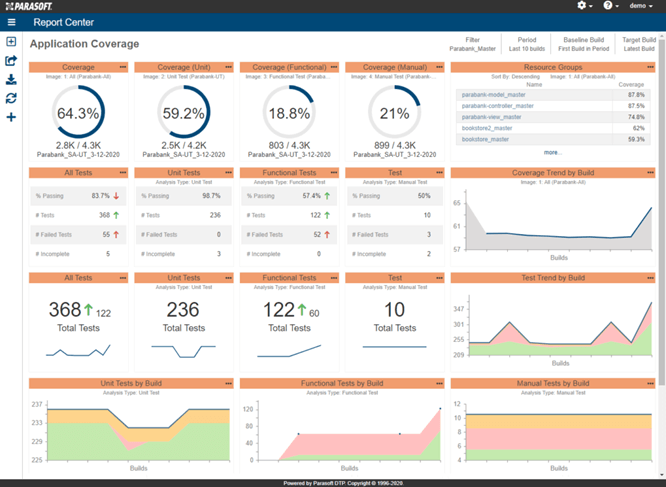

Parasoft DTP Dashboard Code Coverage Reporting Example

Structural code coverage can help answer the question: Have I done enough testing?

It may also be a compliance requirement that you have to satisfy. The goal of obtaining code coverage is an added means to help ensure code safety, security, and reliability. It shows proof that testing has been performed. And through this testing, defects have been identified.

One common defect that code coverage can easily identify, which was not mentioned in the earlier sections, is the uncovering of dead code. Dead code is code that isn’t being called or invoked in any way. It’s code that probably got left behind due to a change in requirements or accidentally forgotten.

Coverage can also be achieved through various testing methods (system, unit, integration, manual, API). The cumulative coverage from these methods can be combined to prove 100% code coverage.

There are also various levels of coverage (statement, branch, MC/DC, and object code) that you may need to achieve, with the criteria based on your SIL, ASIL, or DAL. Fortunately, Parasoft offers automated software testing solutions that support the methods you need for obtaining 100% structural code coverage.
