Deliver secure, compliant software using static code analysis solutions that efficiently identify and resolve vulnerabilities to ensure safety and regulatory adherence.

Find bugs early in the SDLC to save time and money on debugging, maintenance, and potential system failures while improving overall software reliability.

Enhance static code analysis workflows with advanced algorithms that intelligently identify problems, prioritize rule violation findings, and simplify remediation steps.

Ensure consistent code quality checks at every stage of the SDLC to minimize errors, accelerate deployments, and increase the efficiency of software delivery.

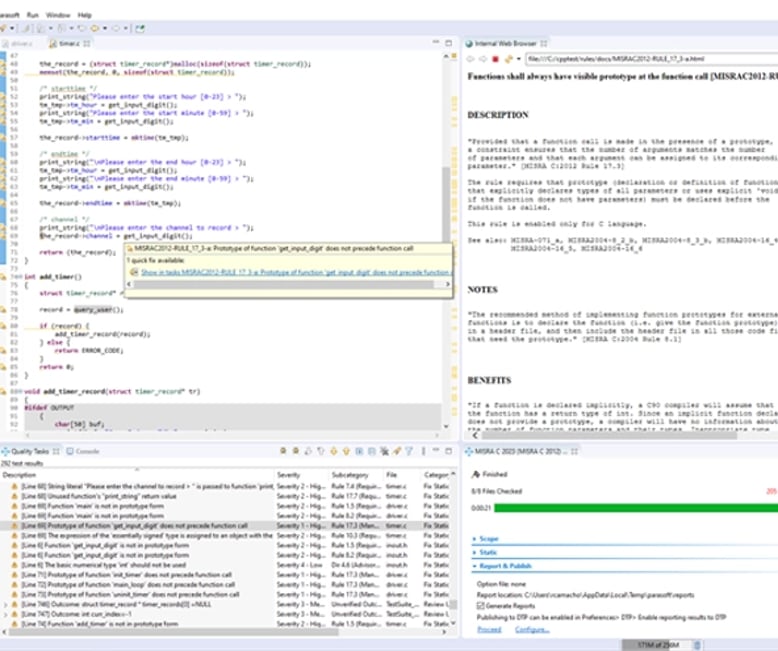

Parasoft’s static analysis solution for C/C++ software development helps teams satisfy regulatory coding compliance requirements in safety, security, and reliability. With easy integration into developers’ IDEs (VS Code, Eclipse) and modern CI/CD development workflows, C/C++test analyzes the codebase, leveraging advanced algorithms to detect:

C/C++test provides comprehensive coverage in identifying critical issues, potential pitfalls, and areas for improvement by utilizing techniques like AI/ML, pattern recognition, rule-based analysis, data and control flow analysis, and metrics analysis.

Teams can customize configurations to fine-tune analyses for project-specific requirements or for compliance with coding standards like MISRA, CERT, AUTOSAR C++14, and more.

Once deployed, C/C++test becomes a valuable and integral part of the development workflow. When integrated as part of the CI/CD pipeline for continuous testing, code quality checks occur automatically at every stage of development—from initial code commits to final deployment.

Parasoft’s AI assistant in the VS Code extension explains static analysis violations to developers. To fix the violations, developers get code suggestions from the integrated AI-powered assistant, GitHub Copilot.

Here’s how our AI and ML static analysis works.

C/C++test automates risk mitigation, optimizes productivity, and elevates the overall quality of software projects.

100% achieved compliance for CERT and AUTOSAR C++14.

Reduced time to market.

Parasoft Jtest offers comprehensive coverage in standards like OWASP, CWE, CERT, PCI DSS, and DISA ASD STIG, ensuring thorough examination of code for potential defects. Customizable configurations allow teams to tailor the analysis for unique project requirements, enabling precise detection and mitigation of risks specific to an application with a minimum of noise. Use Jtest’s IDE-based Live Static Analysis to automate code scans during active development to identify and address coding flaws as they arise.

Optimized for issue remediation and privacy with patented on-premises AI and ML, Jtest’s static analysis works like this:

Our static analysis solution for Java application development provides a comprehensive set of static analysis checkers and testing techniques that teams can use to perform static code analysis in the following ways:

Parasoft’s static analysis provides “accurate analysis and ease of use.”

Daniele De Nicola, Product Software Verification & Validation Supervisor at Leonardo

Increased code quality for Java applications.

Reduced costs by finding defects earlier.

Our static analysis solution for C# and VB.NET languages provides a comprehensive set of static analysis checkers that teams can use to:

Developers can perform static analysis by integrating Parasoft dotTEST into IDEs, like Visual Studio and VS Code, or using the command-line interface. It also seamlessly integrates into the development pipeline.

Use dotTEST’s Live Static Analysis in the Visual Studio IDE for autonomous code scanning during active development to identify and address coding flaws as they arise.

Teams get access to static analysis results immediately within the IDE and through generated reports (HTML, PDF, XML). They can also view metrics such as the number of defects, their severity, and their location within the code on DTP, Parasoft’s reporting and analytics dashboard.

AI-optimized for issue remediation, dotTEST enables developers to remediate static analysis findings quickly through its integration with various LLM (large language model) providers like OpenAI and Azure OpenAI. With LLM integrations, developers can leverage GenAI to assist in situations where they may not be familiar with a specific rule or violation. Our solution provides:

Achieved compliance with PCI DSS.

Security improved with OWASP and CWE compliance.

Developers publish static analysis results from Parasoft C/C++test, Jtest, or dotTEST into Parasoft DTP, which consolidates the data in intelligent dashboards, detailed reports, and actionable analytics.

Teams can leverage pre-configured dashboards for compliance tracking and reporting to identify where to focus testing and triage efforts to achieve compliance targets.

AI improves each developer’s experience by helping prioritize violations. DTP’s interactive widgets show the number of violations from build to build, grouped by severity classification or by assigned developer. Teams can use DTP’s violation explorer to track violations, assign them to specific engineers for remediation, and set priority levels.
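To make the build-to-build view concrete, here is a minimal sketch of the kind of aggregation such a widget might perform. It is not DTP's API or data model: the `Violation` record, its field names, the build labels, and the rule IDs are all hypothetical, and the convention that severity 1 is the most severe is an assumption.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Violation:
    """Hypothetical record; field names are illustrative, not DTP's schema."""
    build: str
    rule: str
    severity: int  # assumed convention: 1 is the most severe
    assignee: str


def count_by(violations, key):
    """Count violations per (build, <key value>) pair."""
    return Counter((v.build, getattr(v, key)) for v in violations)


def severity_trend(violations, previous, current):
    """Return {severity: change in count} going from one build to the next."""
    counts = count_by(violations, "severity")
    severities = {sev for (_, sev) in counts}
    # Counter returns 0 for missing keys, so new or vanished severities work.
    return {
        sev: counts[(current, sev)] - counts[(previous, sev)]
        for sev in sorted(severities)
    }


# Made-up sample data for two consecutive builds.
violations = [
    Violation("build-101", "RULE-A", 1, "alice"),
    Violation("build-101", "RULE-B", 2, "bob"),
    Violation("build-101", "RULE-B", 2, "alice"),
    Violation("build-102", "RULE-A", 1, "alice"),
    Violation("build-102", "RULE-B", 2, "bob"),
    Violation("build-102", "RULE-C", 3, "bob"),
]

by_assignee = count_by(violations, "assignee")
trend = severity_trend(violations, "build-101", "build-102")
print(trend)
```

A negative value in `trend` means fewer violations of that severity in the newer build, and `by_assignee` gives the per-developer counts that a triage view could sort on.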

Here’s how AI/ML-based analytics streamline static analysis results triaging: